【YOLOv11 Improvement - Convolution (Conv)】ACmix (Mixed Self-Attention and Convolution): A Hybrid Model of Self-Attention and Convolution


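Abstract

Convolution and self-attention are two powerful techniques for representation learning, and they are usually considered two peer approaches that are distinct from each other. In this paper, we show that there exists a strong underlying relation between them: from a computational perspective, the bulk of the computation of the two paradigms is in fact done with the same operation. Specifically, we first show that a traditional k×k convolution can be decomposed into k^2 individual 1×1 convolutions, followed by shift and summation operations. Then, we interpret the projections of queries, keys, and values in the self-attention module as multiple 1×1 convolutions, followed by the computation of attention weights and the aggregation of the values. Therefore, the first stage of both modules comprises similar operations. More importantly, the first stage dominates the computational complexity (quadratic in the channel size) compared with the second stage. This observation naturally leads to an elegant integration of these two seemingly distinct paradigms, i.e., a mixed model that enjoys the benefits of both self-attention and convolution (ACmix), while having minimal computational overhead compared with its pure convolution or pure self-attention counterparts. Extensive experiments show that our model consistently achieves better results than competitive baselines on image recognition and downstream tasks. Code and pre-trained models will be released at https://github.com/Panxuran/ACmix and https://gitee.com/mindspore/models.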
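The decomposition claimed above can be checked numerically. The sketch below is a minimal NumPy illustration (not code from the ACmix repository), assuming stride 1, an odd kernel size k, zero "same" padding, and cross-correlation as used by deep-learning frameworks. `conv2d_direct` computes a k×k convolution directly, while `conv2d_as_shifted_1x1` runs k^2 separate 1×1 convolutions, shifts each result by its kernel offset, and sums them; both function names are hypothetical.

```python
import numpy as np


def conv2d_direct(x, w):
    """Plain k x k convolution (cross-correlation, stride 1, zero 'same' padding).

    x: (C_in, H, W), w: (C_out, C_in, k, k) -> (C_out, H, W)
    """
    c_out, c_in, k, _ = w.shape
    p = k // 2
    _, H, W = x.shape
    xp = np.pad(x, ((0, 0), (p, p), (p, p)))
    out = np.zeros((c_out, H, W))
    for i in range(H):
        for j in range(W):
            patch = xp[:, i:i + k, j:j + k]  # (C_in, k, k) window around (i, j)
            out[:, i, j] = np.tensordot(w, patch, axes=([1, 2, 3], [0, 1, 2]))
    return out


def conv2d_as_shifted_1x1(x, w):
    """Same result computed as k^2 1x1 convolutions, each shifted, then summed."""
    c_out, c_in, k, _ = w.shape
    p = k // 2
    _, H, W = x.shape
    out = np.zeros((c_out, H, W))
    for a in range(k):
        for b in range(k):
            # Stage 1: a 1x1 convolution using the (a, b) slice of the kernel
            y = np.einsum("oc,chw->ohw", w[:, :, a, b], x)
            # Stage 2: shift the result by (a - p, b - p) with zero fill, then accumulate
            yp = np.pad(y, ((0, 0), (p, p), (p, p)))
            out += yp[:, a:a + H, b:b + W]
    return out


rng = np.random.default_rng(0)
x = rng.standard_normal((4, 6, 5))
w = rng.standard_normal((3, 4, 3, 3))
print(np.allclose(conv2d_direct(x, w), conv2d_as_shifted_1x1(x, w)))  # True
```

The loops favor readability over speed; the point is only that the expensive part (the 1×1 convolutions) is separable from the cheap shift-and-sum.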

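Key innovations

- Discovering shared operations: ACmix reveals a strong underlying relation between self-attention and convolution, showing that most of their computation is actually carried out by the same operation. By decomposing a conventional convolution into multiple 1×1 convolutions, and by interpreting the query, key, and value projections of the self-attention module as multiple 1×1 convolutions, ACmix identifies the operation the two techniques have in common.

- Stage-wise computational complexity: ACmix points out that, in both self-attention and convolution modules, the first stage carries most of the computational cost (quadratic in the channel count). This observation naturally leads to an elegant integration of the two seemingly different paradigms: by sharing that expensive first stage, ACmix fuses self-attention and convolution with minimal extra overhead.

- Lightweight shift and aggregation: for efficiency, ACmix replaces inefficient tensor-shift operations with depthwise convolutions, yielding a lightweight shift operation. This improves the model's practical efficiency while preserving data locality.

- Modular design: ACmix combines self-attention and convolution in a modular way while keeping the two paths independent of each other. This lets ACmix exploit the strengths of both techniques while avoiding expensive repeated projections.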

Links

Paper: paper link

Code: code link

Basic principles

ACmix is a model that combines self-attention and convolution, with the goal of more efficient representation learning.

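Stage I:

- The input features are first projected by three 1×1 convolutions, and each projection is reshaped into N pieces, yielding a rich set of intermediate features that contains 3×N feature maps.

- For the self-attention path, the intermediate features are gathered into N groups, each containing three pieces, one from each of the three 1×1 convolutions. These three feature maps serve as the queries, keys, and values, as in a conventional multi-head self-attention module.

- For the convolution path, a lightweight fully connected layer generates k^2 feature maps. Shifting and aggregating these generated features processes the input in a convolutional manner and gathers information from a local receptive field.

- Finally, the outputs of the two paths are added together, with their strengths controlled by two learnable scalars: F_out = α·F_att + β·F_conv. In the code, α and β are rate1 and rate2, both initialized to 0.5.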
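The grouping into 3×N pieces and the final weighted sum can be sketched with NumPy as follows. This is an illustrative sketch, not the ACmix implementation: `acmix_stage1_split` and `fuse` are hypothetical helper names, the 1×1 convolutions are written as an `einsum` over channels, and the defaults α = β = 0.5 mirror how `rate1` and `rate2` are initialized in the code.

```python
import numpy as np


def acmix_stage1_split(x, w_q, w_k, w_v, heads):
    """Stage I sketch: three 1x1 convolutions, each result split into `heads` pieces.

    x: (C_in, H, W); w_q, w_k, w_v: (C_out, C_in) weights of the 1x1 convolutions.
    Returns shape (3, heads, C_out // heads, H, W), i.e. 3 x N intermediate pieces.
    """
    c_out = w_q.shape[0]
    assert c_out % heads == 0, "C_out must be divisible by the number of heads"
    pieces = []
    for w in (w_q, w_k, w_v):
        proj = np.einsum("oc,chw->ohw", w, x)  # a 1x1 convolution is a per-pixel matmul
        pieces.append(proj.reshape(heads, c_out // heads, *x.shape[1:]))
    return np.stack(pieces)


def fuse(f_att, f_conv, alpha=0.5, beta=0.5):
    """F_out = alpha * F_att + beta * F_conv (alpha and beta are learnable in ACmix)."""
    return alpha * f_att + beta * f_conv


rng = np.random.default_rng(0)
x = rng.standard_normal((8, 5, 5))
w_q, w_k, w_v = (rng.standard_normal((16, 8)) for _ in range(3))
pieces = acmix_stage1_split(x, w_q, w_k, w_v, heads=4)
print(pieces.shape)  # (3, 4, 4, 5, 5): 3 projections x N=4 heads

# Attention path: head n uses pieces[0, n], pieces[1, n], pieces[2, n] as query, key, value.
q0, k0, v0 = pieces[:, 0]
# Convolution path: all 3 x N pieces are fed to the lightweight fully connected layer.
```

Splitting channels into contiguous blocks of C_out / N matches the `q.view(b*self.head, self.head_dim, h, w)` reshape in the PyTorch code.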

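Improved shift and sum:

- In the convolution path, the intermediate features go through the shift-and-sum operations performed in a conventional convolution module. For efficiency, the inefficient tensor shifts are replaced by a depthwise convolution with fixed kernels.

- The fixed depthwise kernels implement a lightweight shift that does not break data locality and is easier to vectorize. (In the code, these kernels are initialized as one-hot shift kernels but left trainable, requires_grad=True.)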
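The shift-by-depthwise-convolution trick can be illustrated in NumPy. The sketch builds the one-hot kernels the same way `reset_parameters` does (`kernel[i, i // k, i % k] = 1`) and checks that a depthwise convolution with kernel i reproduces an explicit tensor shift by the offset (i // k - k // 2, i % k - k // 2). Summing the k^2 shifted maps then gives the shift-and-sum result; in the PyTorch code that sum happens inside the grouped `dep_conv`. The helper names are hypothetical and zero padding is assumed.

```python
import numpy as np


def shift_kernels(k):
    """One-hot k x k kernels, built as in ACmix.reset_parameters."""
    kernel = np.zeros((k * k, k, k))
    for i in range(k * k):
        kernel[i, i // k, i % k] = 1.0
    return kernel


def depthwise_conv(x, kernels):
    """Depthwise cross-correlation with zero 'same' padding: channel c uses kernels[c]."""
    C, H, W = x.shape
    k = kernels.shape[-1]
    p = k // 2
    xp = np.pad(x, ((0, 0), (p, p), (p, p)))
    out = np.zeros((C, H, W))
    for i in range(H):
        for j in range(W):
            out[:, i, j] = (xp[:, i:i + k, j:j + k] * kernels).sum(axis=(1, 2))
    return out


def explicit_shift(x2d, dy, dx):
    """Tensor shift: out[i, j] = x2d[i + dy, j + dx], zero where out of range."""
    H, W = x2d.shape
    out = np.zeros((H, W))
    for i in range(H):
        for j in range(W):
            if 0 <= i + dy < H and 0 <= j + dx < W:
                out[i, j] = x2d[i + dy, j + dx]
    return out


k = 3
f = np.random.default_rng(0).standard_normal((k * k, 4, 6))  # k^2 maps, e.g. from the fc layer
shifted = depthwise_conv(f, shift_kernels(k))
for i in range(k * k):
    # Depthwise channel i equals an explicit shift by the i-th window offset
    assert np.allclose(shifted[i], explicit_shift(f[i], i // k - k // 2, i % k - k // 2))
aggregated = shifted.sum(axis=0)  # shift-and-sum: one output map
```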

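Stage II:

- In the second stage, ACmix adds some extra computation: a lightweight fully connected layer and a group convolution. Their cost grows linearly with the channel size C and is therefore small compared with the first stage.

- The lightweight fully connected layer and the group convolution generate and aggregate the features of the convolution path, which further improves the flexibility and performance of the model.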
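The linear-versus-quadratic claim can be checked with back-of-the-envelope arithmetic. The function below counts multiply-accumulates (MACs) from the layer shapes in the ACmix code (conv1/conv2/conv3 for stage I; fc and dep_conv for the convolution path) under simplifying assumptions: stride 1, `head` divides C_out, and biases, softmax, and the attention path's window aggregation are ignored. `acmix_macs` is a hypothetical helper, not part of any library.

```python
def acmix_macs(c_in, c_out, h, w, heads=4, k_conv=3):
    """Rough MAC counts derived from the ACmix layer shapes (stride 1)."""
    hw = h * w
    head_dim = c_out // heads
    # Stage I: three 1x1 convolutions, c_in -> c_out each (quadratic in channels)
    stage1 = 3 * c_in * c_out * hw
    # fc: 1x1 conv from 3*heads to k^2 channels, applied at head_dim * H * W positions
    fc = (3 * heads) * (k_conv * k_conv) * head_dim * hw
    # dep_conv: c_out outputs, each reading k^2 input channels with a k x k kernel
    dep = c_out * (k_conv * k_conv) * (k_conv * k_conv) * hw
    return stage1, fc + dep


print(acmix_macs(256, 256, 20, 20))  # (78643200, 11059200)
print(acmix_macs(512, 512, 20, 20))  # stage I grows 4x, the stage II extras only 2x
```

Doubling the channel count quadruples the shared projections but only doubles the extra layers, which is why reusing the first stage for both paths is cheap.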

Core code

```python
import torch
import torch.nn as nn


# Positional encoding: a 2-channel grid of normalized (x, y) coordinates in [-1, 1]
def position(H, W, is_cuda=True):
    # Build the horizontal (x) and vertical (y) coordinate maps on the GPU or the CPU
    if is_cuda:
        loc_w = torch.linspace(-1.0, 1.0, W).cuda().unsqueeze(0).repeat(H, 1)
        loc_h = torch.linspace(-1.0, 1.0, H).cuda().unsqueeze(1).repeat(1, W)
    else:
        loc_w = torch.linspace(-1.0, 1.0, W).unsqueeze(0).repeat(H, 1)
        loc_h = torch.linspace(-1.0, 1.0, H).unsqueeze(1).repeat(1, W)
    loc = torch.cat([loc_w.unsqueeze(0), loc_h.unsqueeze(0)], 0).unsqueeze(0)  # (1, 2, H, W)
    return loc


# Stride helper: downsample the input tensor by keeping every `stride`-th row and column
def stride(x, stride):
    return x[:, :, ::stride, ::stride]


# Initialize a tensor in place to 0.5
def init_rate_half(tensor):
    if tensor is not None:
        tensor.data.fill_(0.5)


# Initialize a tensor in place to 0
def init_rate_0(tensor):
    if tensor is not None:
        tensor.data.fill_(0.)


# ACmix module
class ACmix(nn.Module):
    def __init__(self, in_planes, out_planes, kernel_att=7, head=4, kernel_conv=3, stride=1, dilation=1):
        super(ACmix, self).__init__()
        self.in_planes = in_planes
        self.out_planes = out_planes
        self.head = head
        self.kernel_att = kernel_att
        self.kernel_conv = kernel_conv
        self.stride = stride
        self.dilation = dilation
        self.rate1 = torch.nn.Parameter(torch.Tensor(1))  # weight of the attention path (alpha)
        self.rate2 = torch.nn.Parameter(torch.Tensor(1))  # weight of the convolution path (beta)
        self.head_dim = self.out_planes // self.head  # out_planes must be divisible by head

        # Three 1x1 convolutions that produce the queries, keys, and values (shared stage I)
        self.conv1 = nn.Conv2d(in_planes, out_planes, kernel_size=1)
        self.conv2 = nn.Conv2d(in_planes, out_planes, kernel_size=1)
        self.conv3 = nn.Conv2d(in_planes, out_planes, kernel_size=1)
        # 1x1 convolution that embeds the 2-channel coordinate grid
        self.conv_p = nn.Conv2d(2, self.head_dim, kernel_size=1)

        # Local attention window: reflection padding, then unfold into kernel_att x kernel_att patches
        self.padding_att = (self.dilation * (self.kernel_att - 1) + 1) // 2
        self.pad_att = torch.nn.ReflectionPad2d(self.padding_att)
        self.unfold = nn.Unfold(kernel_size=self.kernel_att, padding=0, stride=self.stride)
        self.softmax = torch.nn.Softmax(dim=1)

        # Convolution path: lightweight fully connected layer and grouped (shift) convolution
        self.fc = nn.Conv2d(3 * self.head, self.kernel_conv * self.kernel_conv, kernel_size=1, bias=False)
        self.dep_conv = nn.Conv2d(self.kernel_conv * self.kernel_conv * self.head_dim, out_planes,
                                  kernel_size=self.kernel_conv, bias=True, groups=self.head_dim,
                                  padding=self.kernel_conv // 2, stride=stride)

        self.reset_parameters()

    def reset_parameters(self):
        # Both paths start with the same weight, 0.5
        init_rate_half(self.rate1)
        init_rate_half(self.rate2)
        # One-hot kernels: kernel i shifts its input by the i-th offset of the k x k window
        kernel = torch.zeros(self.kernel_conv * self.kernel_conv, self.kernel_conv, self.kernel_conv)
        for i in range(self.kernel_conv * self.kernel_conv):
            kernel[i, i // self.kernel_conv, i % self.kernel_conv] = 1.
        kernel = kernel.squeeze(0).repeat(self.out_planes, 1, 1, 1)  # (out_planes, k*k, k, k)
        self.dep_conv.weight = nn.Parameter(data=kernel, requires_grad=True)
        # init_rate_0 returns None, so `self.dep_conv.bias = init_rate_0(...)` would silently
        # delete the bias; zero it in place instead
        init_rate_0(self.dep_conv.bias)

    def forward(self, x):
        # Stage I: the shared 1x1 convolutions produce queries, keys, and values
        q, k, v = self.conv1(x), self.conv2(x), self.conv3(x)
        scaling = float(self.head_dim) ** -0.5
        b, c, h, w = q.shape
        h_out, w_out = h // self.stride, w // self.stride

        # Attention path
        # Positional encoding; cast to x.dtype so it also works when the model runs in FP16
        pe = self.conv_p(position(h, w, x.is_cuda).to(x.dtype))

        q_att = q.view(b * self.head, self.head_dim, h, w) * scaling
        k_att = k.view(b * self.head, self.head_dim, h, w)
        v_att = v.view(b * self.head, self.head_dim, h, w)

        if self.stride > 1:
            q_att = stride(q_att, self.stride)
            q_pe = stride(pe, self.stride)
        else:
            q_pe = pe

        # Unfold the keys and positional encodings into local windows
        unfold_k = self.unfold(self.pad_att(k_att)).view(
            b * self.head, self.head_dim, self.kernel_att * self.kernel_att, h_out, w_out)
        unfold_rpe = self.unfold(self.pad_att(pe)).view(
            1, self.head_dim, self.kernel_att * self.kernel_att, h_out, w_out)

        # Attention weights over the kernel_att x kernel_att window
        att = (q_att.unsqueeze(2) * (unfold_k + q_pe.unsqueeze(2) - unfold_rpe)).sum(1)
        att = self.softmax(att)

        # Apply the attention weights to the unfolded values
        out_att = self.unfold(self.pad_att(v_att)).view(
            b * self.head, self.head_dim, self.kernel_att * self.kernel_att, h_out, w_out)
        out_att = (att.unsqueeze(1) * out_att).sum(2).view(b, self.out_planes, h_out, w_out)

        # Convolution path: the fc layer mixes the 3*head q/k/v pieces into k*k feature maps
        f_all = self.fc(torch.cat([q.view(b, self.head, self.head_dim, h * w),
                                   k.view(b, self.head, self.head_dim, h * w),
                                   v.view(b, self.head, self.head_dim, h * w)], 1))
        f_conv = f_all.permute(0, 2, 1, 3).reshape(x.shape[0], -1, x.shape[-2], x.shape[-1])
        # Shift and sum, implemented as a grouped convolution
        out_conv = self.dep_conv(f_conv)

        # Weighted sum of the two paths: rate1 * F_att + rate2 * F_conv
        return self.rate1 * out_att + self.rate2 * out_conv
```
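Two constraints are implicit in the code above: `q.view(b * self.head, self.head_dim, h, w)` only works when `out_planes` is divisible by `head`, and `ReflectionPad2d` requires its padding (3 for the default `kernel_att=7`) to be smaller than the feature-map height and width. The hypothetical helper below (not part of ACmix or Ultralytics) checks both in plain Python before a model is built; it reuses the `padding_att` formula from `__init__`.

```python
def check_acmix_config(out_planes, h, w, head=4, kernel_att=7, dilation=1):
    """Hypothetical pre-flight check for the ACmix module. Returns a list of problems."""
    problems = []
    if out_planes % head != 0:
        problems.append(f"out_planes={out_planes} is not divisible by head={head}")
    # Same formula as self.padding_att in ACmix.__init__
    padding_att = (dilation * (kernel_att - 1) + 1) // 2
    if padding_att >= h or padding_att >= w:
        problems.append(f"ReflectionPad2d({padding_att}) needs h and w > {padding_att}, got {h}x{w}")
    return problems


print(check_acmix_config(64, 80, 80))   # []
print(check_acmix_config(66, 80, 80))   # divisibility problem
print(check_acmix_config(256, 3, 3))    # feature map too small for the 7x7 window
```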

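Adding the code to YOLOv11

In the ultralytics/nn/ directory under the project root, create a new attention directory, then create a Python file named ACmix.py inside it and paste the code into it.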

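Note that the original code raises an error at runtime, so I have modified it:

- The error is caused by a dtype mismatch between the input of conv_p (the float32 coordinate grid returned by position()) and conv_p's bias (c10::Half, i.e. half precision). Biases are normally float32, but they become Half when the model is cast to FP16. This typically happens during validation, where Ultralytics runs the model with model.half() when AMP is enabled, while position() always builds a float32 tensor.

- Converting the coordinate tensor to the input's dtype resolves the mismatch, because the generated position tensor then has the same dtype as the other tensors in the model. The .to(dtype) method converts a tensor to the given dtype.

- https://github.com/LeapLabTHU/ACmix/issues/21

- Change

```python
pe = self.conv_p(position(h, w, x.is_cuda))
```

to

```python
pe = self.conv_p(position(h, w, x.is_cuda).to(x.dtype))
```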

```python
import torch
import torch.nn as nn


def position(H, W, is_cuda=True):
    if is_cuda:
        loc_w = torch.linspace(-1.0, 1.0, W).cuda().unsqueeze(0).repeat(H, 1)
        loc_h = torch.linspace(-1.0, 1.0, H).cuda().unsqueeze(1).repeat(1, W)
    else:
        loc_w = torch.linspace(-1.0, 1.0, W).unsqueeze(0).repeat(H, 1)
        loc_h = torch.linspace(-1.0, 1.0, H).unsqueeze(1).repeat(1, W)
    loc = torch.cat([loc_w.unsqueeze(0), loc_h.unsqueeze(0)], 0).unsqueeze(0)
    return loc


def stride(x, stride):
    return x[:, :, ::stride, ::stride]


def init_rate_half(tensor):
    if tensor is not None:
        tensor.data.fill_(0.5)


def init_rate_0(tensor):
    if tensor is not None:
        tensor.data.fill_(0.)


class ACmix(nn.Module):
    def __init__(self, in_planes, out_planes, kernel_att=7, head=4, kernel_conv=3, stride=1, dilation=1):
        super(ACmix, self).__init__()
        self.in_planes = in_planes
        self.out_planes = out_planes
        self.head = head
        self.kernel_att = kernel_att
        self.kernel_conv = kernel_conv
        self.stride = stride
        self.dilation = dilation
        self.rate1 = torch.nn.Parameter(torch.Tensor(1))
        self.rate2 = torch.nn.Parameter(torch.Tensor(1))
        self.head_dim = self.out_planes // self.head

        self.conv1 = nn.Conv2d(in_planes, out_planes, kernel_size=1)
        self.conv2 = nn.Conv2d(in_planes, out_planes, kernel_size=1)
        self.conv3 = nn.Conv2d(in_planes, out_planes, kernel_size=1)
        self.conv_p = nn.Conv2d(2, self.head_dim, kernel_size=1)

        self.padding_att = (self.dilation * (self.kernel_att - 1) + 1) // 2
        self.pad_att = torch.nn.ReflectionPad2d(self.padding_att)
        self.unfold = nn.Unfold(kernel_size=self.kernel_att, padding=0, stride=self.stride)
        self.softmax = torch.nn.Softmax(dim=1)

        self.fc = nn.Conv2d(3 * self.head, self.kernel_conv * self.kernel_conv, kernel_size=1, bias=False)
        self.dep_conv = nn.Conv2d(self.kernel_conv * self.kernel_conv * self.head_dim, out_planes,
                                  kernel_size=self.kernel_conv, bias=True, groups=self.head_dim,
                                  padding=self.kernel_conv // 2, stride=stride)

        self.reset_parameters()

    def reset_parameters(self):
        init_rate_half(self.rate1)
        init_rate_half(self.rate2)
        kernel = torch.zeros(self.kernel_conv * self.kernel_conv, self.kernel_conv, self.kernel_conv)
        for i in range(self.kernel_conv * self.kernel_conv):
            kernel[i, i // self.kernel_conv, i % self.kernel_conv] = 1.
        kernel = kernel.squeeze(0).repeat(self.out_planes, 1, 1, 1)
        self.dep_conv.weight = nn.Parameter(data=kernel, requires_grad=True)
        # Zero the bias in place; init_rate_0 returns None, so do not assign its result
        init_rate_0(self.dep_conv.bias)

    def forward(self, x):
        q, k, v = self.conv1(x), self.conv2(x), self.conv3(x)
        scaling = float(self.head_dim) ** -0.5
        b, c, h, w = q.shape
        h_out, w_out = h // self.stride, w // self.stride

        # attention path
        # positional encoding
        pe = self.conv_p(position(h, w, x.is_cuda).to(x.dtype))

        q_att = q.view(b * self.head, self.head_dim, h, w) * scaling
        k_att = k.view(b * self.head, self.head_dim, h, w)
        v_att = v.view(b * self.head, self.head_dim, h, w)

        if self.stride > 1:
            q_att = stride(q_att, self.stride)
            q_pe = stride(pe, self.stride)
        else:
            q_pe = pe

        # b*head, head_dim, k_att^2, h_out, w_out
        unfold_k = self.unfold(self.pad_att(k_att)).view(
            b * self.head, self.head_dim, self.kernel_att * self.kernel_att, h_out, w_out)
        # 1, head_dim, k_att^2, h_out, w_out
        unfold_rpe = self.unfold(self.pad_att(pe)).view(
            1, self.head_dim, self.kernel_att * self.kernel_att, h_out, w_out)

        # (b*head, head_dim, 1, h_out, w_out) * (b*head, head_dim, k_att^2, h_out, w_out)
        # -> (b*head, k_att^2, h_out, w_out)
        att = (q_att.unsqueeze(2) * (unfold_k + q_pe.unsqueeze(2) - unfold_rpe)).sum(1)
        att = self.softmax(att)

        out_att = self.unfold(self.pad_att(v_att)).view(
            b * self.head, self.head_dim, self.kernel_att * self.kernel_att, h_out, w_out)
        out_att = (att.unsqueeze(1) * out_att).sum(2).view(b, self.out_planes, h_out, w_out)

        # convolution path
        f_all = self.fc(torch.cat([q.view(b, self.head, self.head_dim, h * w),
                                   k.view(b, self.head, self.head_dim, h * w),
                                   v.view(b, self.head, self.head_dim, h * w)], 1))
        f_conv = f_all.permute(0, 2, 1, 3).reshape(x.shape[0], -1, x.shape[-2], x.shape[-1])
        out_conv = self.dep_conv(f_conv)

        return self.rate1 * out_att + self.rate2 * out_conv
```
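To see what the dtype fix touches, the NumPy sketch below reproduces what `position()` builds: a (1, 2, H, W) grid whose first channel holds x coordinates and whose second channel holds y coordinates, both evenly spaced in [-1, 1] and created as float32 (PyTorch's default dtype) regardless of the model's precision. Casting it to float16, the NumPy analogue of `.to(x.dtype)` when `x` is half precision, only changes the storage type. `position_np` is a hypothetical name used for illustration.

```python
import numpy as np


def position_np(H, W):
    """NumPy analogue of position(): a (1, 2, H, W) coordinate grid in [-1, 1]."""
    loc_w = np.tile(np.linspace(-1.0, 1.0, W, dtype=np.float32)[None, :], (H, 1))  # x varies along columns
    loc_h = np.tile(np.linspace(-1.0, 1.0, H, dtype=np.float32)[:, None], (1, W))  # y varies along rows
    return np.stack([loc_w, loc_h])[None]


pe = position_np(3, 5)
print(pe.shape, pe.dtype)        # (1, 2, 3, 5) float32
print(pe[0, 0, 0])               # the x coordinates -1, -0.5, 0, 0.5, 1
pe_half = pe.astype(np.float16)  # analogue of .to(x.dtype) when x is float16
```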

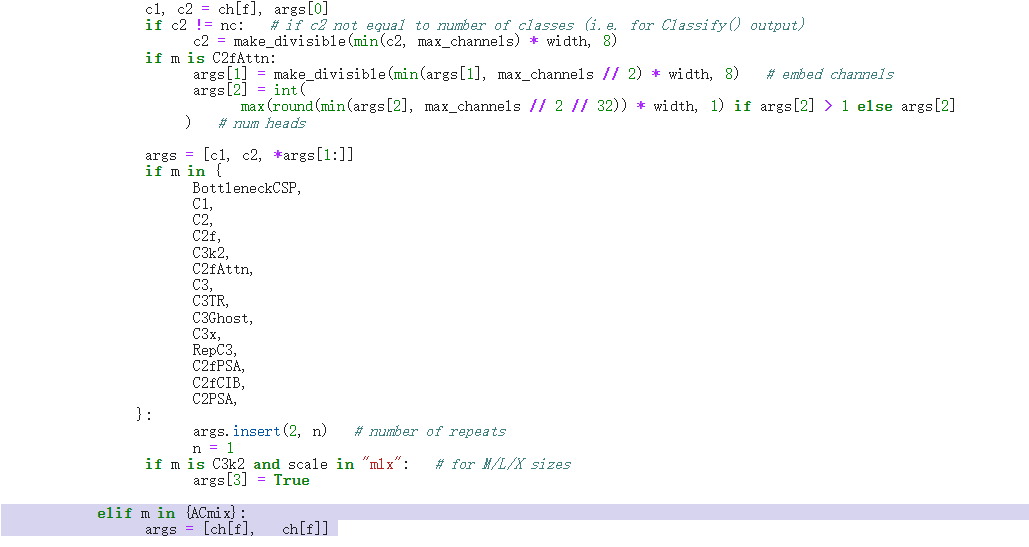

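Registering the module in tasks.py

Make the following changes in ultralytics/nn/tasks.py:

Step 1: Import the module

```python
from ultralytics.nn.attention.ACmix import ACmix
```

Step 2: Register the module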

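In def parse_model(d, ch, verbose=True):, add a branch for ACmix to the if/elif chain that builds each layer's arguments (before its final else branch). Setting c2 explicitly matters because parse_model appends c2 to its channel list after every layer; without it, the value left over from the previous layer is recorded.

```python
elif m in {ACmix}:
    c2 = ch[f]  # ACmix preserves the channel count: output channels = input channels
    args = [ch[f], c2]  # ACmix(in_planes, out_planes)
```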

Configuring yolo11-ACmix.yaml

ultralytics/cfg/models/11/yolo11-ACmix.yaml

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 8 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
  l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
  x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

# YOLO11n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 2, C3k2, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9
  - [-1, 2, C2PSA, [1024]] # 10

# YOLO11n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2, [512, False]] # 13

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2, [256, False]] # 16 (P3/8-small)
  - [-1, 1, ACmix, []] # 17

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 13], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2, [512, False]] # 20 (P4/16-medium)
  - [-1, 1, ACmix, []] # 21

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 10], 1, Concat, [1]] # cat head P5
  - [-1, 2, C3k2, [1024, True]] # 24 (P5/32-large)
  - [-1, 1, ACmix, []] # 25

  - [[17, 21, 25], 1, Detect, [nc]] # Detect(P3, P4, P5)
```
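The `Detect` line must reference the three ACmix layers, and each ACmix inherits the channel width of the C3k2 right before it. The plain-Python sketch below recounts the head layer indices (head layers are numbered after the 11 backbone layers, 0 to 10) and estimates the ACmix channel widths per scale. It assumes Ultralytics' `parse_model` scales a channel argument as `make_divisible(min(c, max_channels) * width, 8)`; `HEAD`, `layer_indices`, and `acmix_channels` are hypothetical names.

```python
import math


def make_divisible(x, divisor=8):
    # Round up to the nearest multiple of `divisor`
    return math.ceil(x / divisor) * divisor


HEAD = [  # (from, module) for the head of yolo11-ACmix.yaml, first index is 11
    (-1, "nn.Upsample"), ([-1, 6], "Concat"), (-1, "C3k2"),
    (-1, "nn.Upsample"), ([-1, 4], "Concat"), (-1, "C3k2"),
    (-1, "ACmix"),
    (-1, "Conv"), ([-1, 13], "Concat"), (-1, "C3k2"),
    (-1, "ACmix"),
    (-1, "Conv"), ([-1, 10], "Concat"), (-1, "C3k2"),
    (-1, "ACmix"),
    ([17, 21, 25], "Detect"),
]


def layer_indices(head, first_index=11):
    return {first_index + i: name for i, (_, name) in enumerate(head)}


def acmix_channels(width, max_channels, c3k2_channels=(256, 512, 1024), heads=4):
    """(channels, head_dim) of each ACmix, equal to the preceding C3k2's output channels."""
    out = []
    for c in c3k2_channels:
        c2 = make_divisible(min(c, max_channels) * width, 8)
        out.append((c2, c2 // heads))
    return out


idx = layer_indices(HEAD)
print([i for i, name in idx.items() if name == "ACmix"])  # [17, 21, 25]
print(acmix_channels(0.25, 1024))  # scale n: [(64, 16), (128, 32), (256, 64)]
```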

Training script

```python
import warnings

warnings.filterwarnings('ignore')

from ultralytics import YOLO

if __name__ == '__main__':
    # Change to the path of your own model config
    model = YOLO('/root/ultralytics-main/ultralytics/cfg/models/11/yolo11-ACmix.yaml')
    # Change to the path of your own dataset config
    model.train(data='/root/ultralytics-main/ultralytics/cfg/datasets/coco8.yaml',
                cache=False,
                imgsz=640,
                epochs=10,
                single_cls=False,  # whether to train as single-class detection
                batch=8,
                close_mosaic=10,
                workers=0,
                device='0',
                optimizer='SGD',
                amp=True,
                project='runs/train',
                name='ACmix',
                )
```

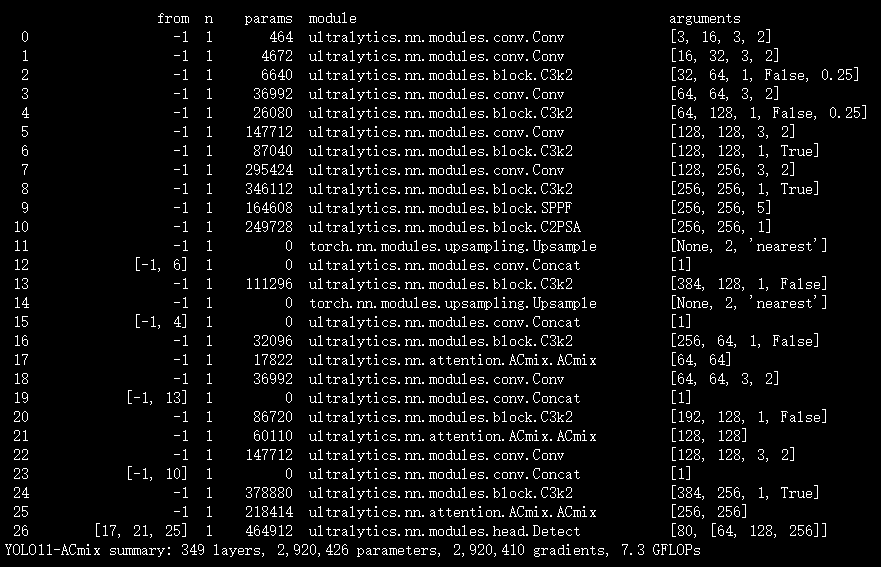

Experimental results
